Is your AI responding safely?

Does it refuse harmful prompts? Does it hallucinate in ways that could hurt your users? Most teams don't test for this systematically.

QA & AI Safety

Developers ship fast and test rarely. In an AI product, that's no longer just a quality problem - it's a safety problem. We set up end-to-end testing for both your software and your AI layer.

2x

Testing layers - software and AI

0

Existing QA framework needed to get started

End-to-end

From unit tests to AI evals and monitoring

A New Problem

Traditional QA checks whether your software works. AI testing checks whether your AI behaves - safely, consistently, and within guardrails. These are different problems that need different tooling and different expertise.

Does it refuse harmful prompts? Does it hallucinate in ways that could hurt your users? Most teams don't test for this systematically.

Prompt injection is the new SQL injection. If you haven't tested for it, you haven't secured it.

Without evals and traces in place, a model update or prompt drift can silently break your product. You won't know until users complain.

What We Set Up

We build the complete testing infrastructure your product needs - both layers, from scratch or on top of what you already have.

Tried and tested. Fully automated.

Unit test framework

Comprehensive unit test coverage across your codebase with CI integration.

Browser and E2E automation

Automated end-to-end flows across browsers and devices using Playwright or equivalent.

Regression testing

Automated regression suites that run on every PR so nothing breaks silently.

Load and performance testing

Stress testing under real-world traffic conditions before you need it.

Continuous QA in CI/CD

Testing wired into your build pipeline so every deployment is gated on quality.

The layer most teams are missing.

AI guardrails

Input and output guardrails that prevent unsafe, off-topic, or harmful responses.

Prompt injection testing

Systematic testing of your AI's resistance to injection attacks and jailbreak attempts.

AI evals framework

Structured evaluation pipelines to measure and track AI output quality over time.

Tracing and monitoring

Full observability on your AI layer using LangSmith, LangFuse, or equivalent tooling.

Safety evals and fencing

Automated safety benchmarks that catch regressions in AI behaviour before they reach users.

Engagement Options

One-time setup or ongoing QA partnership - both options available.

One-Time

We build the framework. Your team runs it.

Ongoing Retainer

We own testing so your engineers own product.

From the Blog

AI Engineering

Vibes-based testing breaks in production. Here's the evaluation framework we use before deploying any LLM-powered feature.

Rahul Nair

Co-Founder & Head of Engineering

Engineering

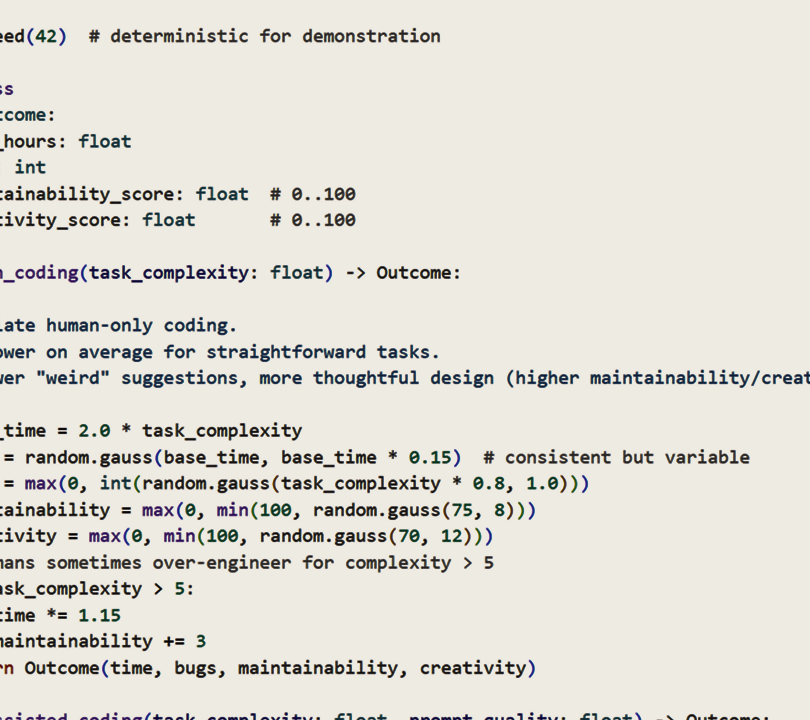

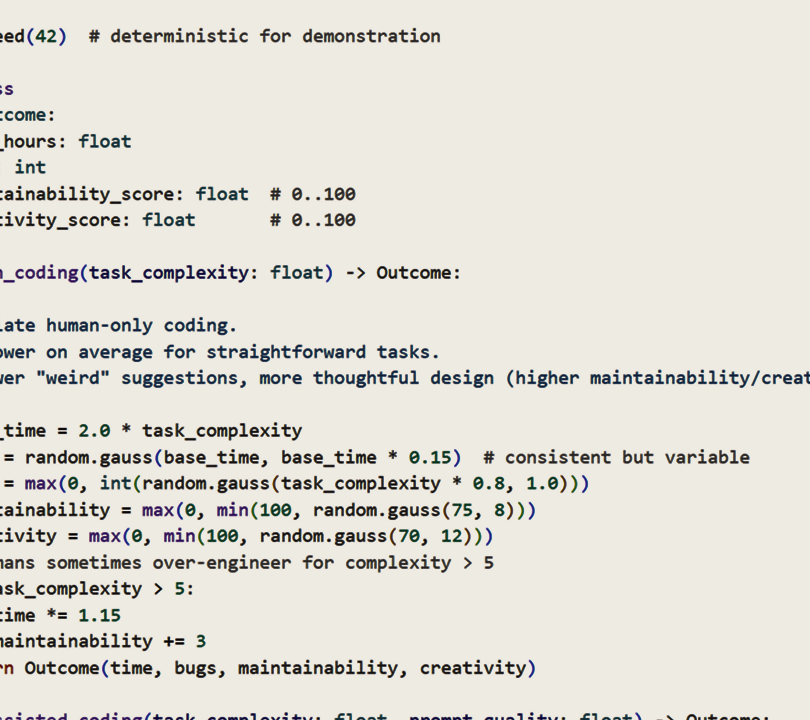

Vibe coding gets you to a demo fast. It rarely gets you to production. Here's what breaks between the two — and how to fix it.

Rahul Nair

Co-Founder & Head of Engineering

Engineering

AI coding tools have genuinely changed what's possible for small engineering teams. But they've also created new failure modes that weren't possible before.

Ajay Kumar

Co-Founder & Director

LET'S TALK

We set up the testing infrastructure so your engineers can keep building without breaking things.